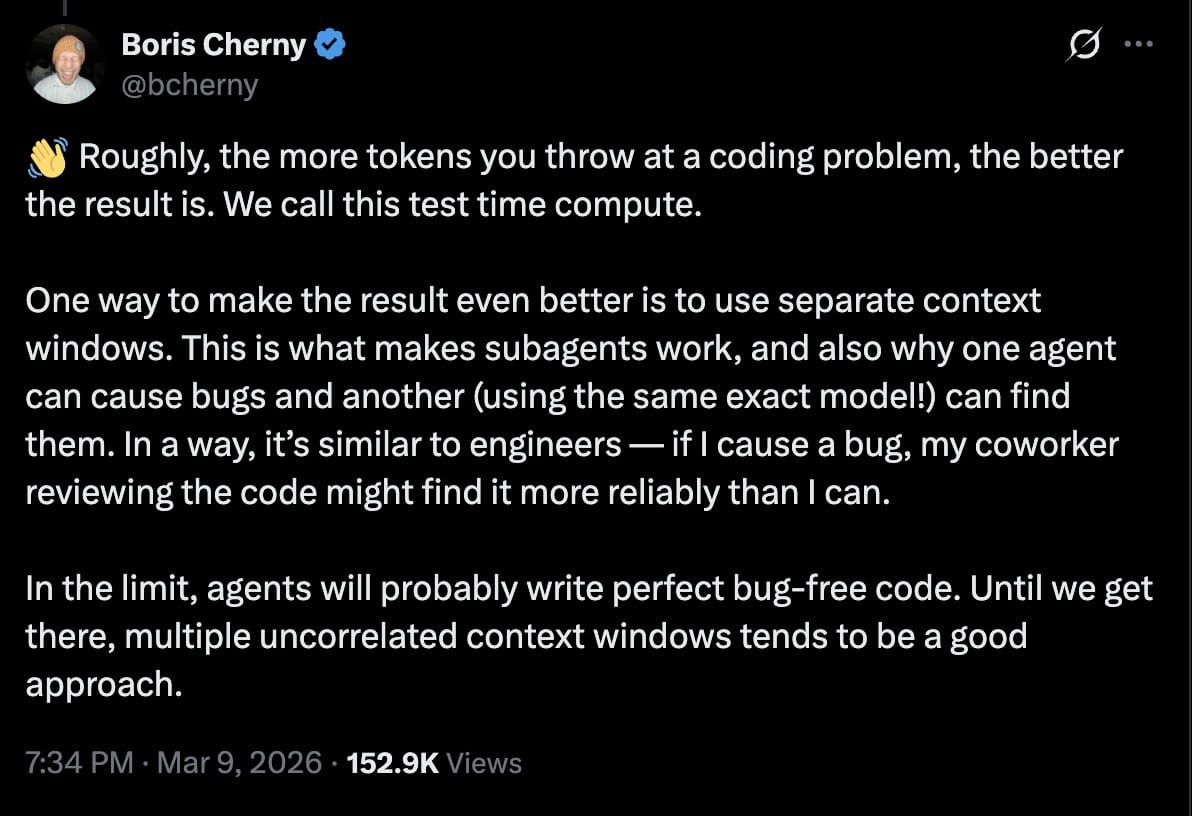

Boris Cherny tweeted something I had so many thoughts about, I didn't even know where to begin.

The Head of Claude Code at Anthropic wrote, roughly, that throwing more tokens at a coding problem produces better results, and that separate context windows are why one agent can catch bugs another created.

He's not wrong.

He's not wrong about the observation, but I think the framing becomes dangerous if it's taken at face value as a doctrine for good-enough engineering, as opposed to being treated as a tactic.

Yes, when one agent generates code and a different context reviews it, you get a relatively less biased opinion. And yes, more inference time can improve outcomes on well-specified tasks.

The problem, however, is all of the implications surrounding this tactic.

This feels a bit anti-engineering

"More tokens = better result" is a model-centric view of a systems-centric problem.

And that framing can easily be interpreted as a philosophy. As a standard we just accept.

If code quality depends on "throw more tokens at it" and "spin up more contexts," the center of gravity could move away from design quality, explicit standards, deterministic checks, and accountable review.

And more inference time doesn't automatically solve the verification problem in agentic coding. Using the same AI system and platform to generate and review code creates confirmation bias by design.

A model reviewing its own outputs (even with a fresh context!) doesn't have genuine independence. It shares the same training distribution, the same learned associations, and the same potential blind spots.

Generation capacity and validation (and/or optimization) capacity are different problems. More compute addresses the former, not the latter. That gap points to several underlying issues.

3 negative implications

For engineering leaders, "throw more tokens at it" creates a dangerous illusion of leverage. Local productivity metrics may improve. But governance, traceability, and root-cause clarity get worse at the same time... and Amazon is already showing signs of it.

For software companies, if the need for code quality improvement is discovered late, the organization pays in incidents, slower maintenance, security exposure, and weaker defensibility when customers or auditors ask questions (and lose trust).

Case in point: Amazon

For individual engineers, the potential failure mode is "deskilling-by-abstraction".

If the workflow implied by Boris' reasoning both teaches and normalizes that loop (generate, retry, fork more contexts, hope the reviewer-agent catches it, etc.), then we're being trained to supervise output volume as opposed to understanding system behavior.

This also collapses code optimization and code review into a self-certification process unless code integrity is preserved a bit better through independent systems for those tasks.

What kind of problem is this, really?

It seems as though many factors and sources contribute to this problem. Someone... something is the culprit here.

I'd rank the potential causes like this:

Verification architecture. The industry is significantly better at producing candidate code than proving that the code is safe, maintainable, and compliant. More compute doesn't fix this. Is this an LLM architecture issue? An overall AI system design issue? This is foundational and the onus here is not on the users of this tech.

Product incentives. Vendors can easily sell visible code generation wins (and they do!). "Your agent writes better code now" is a demo. So that's what gets built and marketed.

Workflow/process design. Engineering teams tack agents onto weak SDLCs instead of strengthening their org and team processes. Throwing AI at engineering processes exacerbates any pre-existing mess they had begrudgingly managed to work through before.

A more holistic, accountable framing?

I don't know the answers yet as it relates to transformer architectures and agents, but I have a feeling deterministic solutions will be explored, both old and new.

Independent code review that does not grade itself. The same system that generates the code shouldn't be the sole arbiter of its quality.

Code guardrails - enforcement during code generation, code review, anywhere, everywhere... Verification works when it checks against something defined. Enforcement before merge.

Deterministic quality gates where possible. Automation is still powerful. Use it! Not everything should be a stochastic retry.

Traceable reasoning, process observability, and ownership.

Someone has to do the dirty work here. Someone has to assess what's suboptimal in agentic engineering processes; what needs optimization. Having a trail of evidence will be critical here.

Agents as amplifiers of your judgment, not a complete swap of the engineering discipline that has allowed us to bring incredible, innovative software to the world.
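To make a few of these items concrete, here's a minimal sketch in Python of what I mean by deterministic gates, review that doesn't grade itself, and a trail of evidence. Everything in it is hypothetical: the `Change` fields, the gate names, and the `evaluate` function are illustrative assumptions of mine, not any vendor's API or a real CI system.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Change:
    """A proposed code change. All fields are hypothetical, for illustration."""
    diff: str
    generated_by: str   # the agent or system that wrote the code
    reviewed_by: str    # the system or person that approved it
    tests_passed: bool  # result of a deterministic test run
    lint_errors: int    # count reported by a deterministic linter


# A gate is a named, deterministic predicate: same input, same verdict.
Gate = tuple[str, Callable[[Change], bool]]

GATES: list[Gate] = [
    ("tests pass", lambda c: c.tests_passed),
    ("no lint errors", lambda c: c.lint_errors == 0),
    ("reviewer is independent of generator",
     lambda c: c.reviewed_by != c.generated_by),
]


def evaluate(change: Change) -> tuple[bool, list[str]]:
    """Run every gate; return (mergeable, audit trail of verdicts)."""
    trail = [
        f"{'PASS' if check(change) else 'FAIL'}: {name}"
        for name, check in GATES
    ]
    mergeable = all(line.startswith("PASS") for line in trail)
    return mergeable, trail


# Usage: a change that passes tests and lint but was reviewed by its own author.
self_reviewed = Change(
    diff="(placeholder diff)",
    generated_by="agent-a",
    reviewed_by="agent-a",
    tests_passed=True,
    lint_errors=0,
)
ok, trail = evaluate(self_reviewed)
# ok is False here, and trail records which gate failed and which passed.
```

The point of the sketch is the shape, not the specifics: no retry changes the verdict, the self-reviewed change is blocked regardless of how many tokens went into it, and the trail is something an auditor can read later.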

If quality depends on "bring enough tokens and hope something passes," what happens when the task is underspecified? When the correctness criteria are ambiguous? When the risk is actually a design decision that looks fine, locally, yet creates brittleness later?

Strong engineering discipline is completely different from simply maximizing compute. My worry is that the industry starts treating "tactics" as an engineering standard.

I write about AI code governance and engineering org strategy for adopting AI. This is my personal take as a developer and hopeful pragmatist about emergent tech (that's not an oxymoron) 😁.